Mario-Leander Reimer

Fifty Shades of Kubernetes Autoscaling

#1 about 4 minutes

Why cloud-native systems require multi-layered elasticity

Modern applications need to be anti-fragile and support hyperscale, which requires elasticity at the workload level (horizontal/vertical) and the infrastructure level (cluster scaling).

#2 about 5 minutes

How metrics and events drive Kubernetes autoscaling decisions

Autoscaling relies on events for cluster-level actions and a multi-layered metrics API for workload scaling based on resource, custom, or external data sources.
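As a rough illustration of the layered metrics API, the sketch below maps each HPA metric source type to the Kubernetes API group that serves it. The group names are the standard aggregated metrics APIs; the helper name `api_group_for` is made up for this example and is not part of any library.

```python
# Which aggregated API group answers each HPA metric source type.
METRIC_API_GROUPS = {
    "Resource": "metrics.k8s.io",            # CPU/memory, typically served by Metrics Server
    "ContainerResource": "metrics.k8s.io",   # per-container CPU/memory
    "Pods": "custom.metrics.k8s.io",         # per-pod custom metrics
    "Object": "custom.metrics.k8s.io",       # metrics describing another cluster object
    "External": "external.metrics.k8s.io",   # metrics from outside the cluster
}


def api_group_for(metric_type: str) -> str:
    """Return the metrics API group for an HPA metric source type (hypothetical helper)."""
    try:
        return METRIC_API_GROUPS[metric_type]
    except KeyError:
        raise ValueError(f"unknown metric source type: {metric_type!r}")


if __name__ == "__main__":
    for metric_type in METRIC_API_GROUPS:
        print(f"{metric_type:18} -> {api_group_for(metric_type)}")
```

The custom and external groups are only available when an adapter that implements them, for example a Prometheus adapter, is installed in the cluster.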

#3 about 5 minutes

Implementing horizontal pod autoscaling with different metrics

The Horizontal Pod Autoscaler (HPA) can scale pods based on simple resource metrics like CPU, custom pod metrics, or external metrics from Prometheus.
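The core of an HPA scaling decision can be sketched in a few lines. The example below follows the formula given in the Kubernetes documentation, desired = ceil(current replicas × current metric / target metric), with the default 10% tolerance. It is a simplified model that ignores stabilization windows, pod readiness, and missing metrics; the function names are illustrative only.

```python
import math


def desired_replicas(current_replicas: int, current_value: float,
                     target_value: float, tolerance: float = 0.1) -> int:
    """Simplified HPA rule: scale in proportion to how far the metric is from its target."""
    ratio = current_value / target_value
    # Inside the tolerance band the HPA leaves the replica count alone.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    return math.ceil(current_replicas * ratio)


def clamp(replicas: int, min_replicas: int, max_replicas: int) -> int:
    """Keep the result within the minReplicas/maxReplicas bounds of the HPA spec."""
    return max(min_replicas, min(max_replicas, replicas))


if __name__ == "__main__":
    # 4 pods averaging 90% CPU against a 60% target
    wanted = desired_replicas(4, current_value=90, target_value=60)
    print(clamp(wanted, min_replicas=1, max_replicas=10))
```

The same rule applies whether the metric is CPU utilization, a custom per-pod metric, or an external value; only the source of `current_value` changes.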

#4 about 2 minutes

Using the vertical pod autoscaler for right-sizing workloads

The Vertical Pod Autoscaler (VPA) can automatically adjust pod resources, but its recommendation mode is most useful for determining optimal CPU and memory settings.
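A minimal sketch of running the VPA in recommendation-only mode: the manifest below sets `updateMode` to `"Off"`, so the VPA computes CPU and memory recommendations without evicting or resizing pods. It assumes the VPA components and the `autoscaling.k8s.io/v1` CRD are installed; the object and Deployment names are placeholders.

```python
import json


def vpa_recommendation_only(name: str, deployment: str, namespace: str = "default") -> dict:
    """Build a VerticalPodAutoscaler manifest that only produces recommendations."""
    return {
        "apiVersion": "autoscaling.k8s.io/v1",
        "kind": "VerticalPodAutoscaler",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "targetRef": {
                "apiVersion": "apps/v1",
                "kind": "Deployment",
                "name": deployment,
            },
            # "Off" means: calculate recommendations, never apply them.
            "updatePolicy": {"updateMode": "Off"},
        },
    }


if __name__ == "__main__":
    # "demo-vpa" and "demo-app" are placeholder names for this example.
    print(json.dumps(vpa_recommendation_only("demo-vpa", "demo-app"), indent=2))
```

The printed JSON can be saved to a file and applied with `kubectl apply -f`; the recommendations then show up in the status of the VPA object.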

#5 about 4 minutes

How the default cluster autoscaler works on GKE

The default cluster autoscaler automatically provisions new nodes when it detects unschedulable pods due to resource constraints, as demonstrated on Google Kubernetes Engine.
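The trigger described here, pending pods that fit on no existing node, can be modeled with a small first-fit simulation. This is a toy model and not the cluster autoscaler's actual algorithm: it considers only CPU and memory requests and a single node template, and all type and function names are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    free_cpu_millis: int
    free_memory_mib: int


@dataclass
class Pod:
    name: str
    cpu_millis: int
    memory_mib: int


def unschedulable_pods(pending: list[Pod], nodes: list[Node]) -> list[Pod]:
    """Return the pending pods that do not fit on any existing node (first-fit)."""
    free = [[n.free_cpu_millis, n.free_memory_mib] for n in nodes]
    leftover = []
    for pod in pending:
        for slot in free:
            if pod.cpu_millis <= slot[0] and pod.memory_mib <= slot[1]:
                slot[0] -= pod.cpu_millis
                slot[1] -= pod.memory_mib
                break
        else:
            leftover.append(pod)
    return leftover


def nodes_to_add(leftover: list[Pod], node_cpu_millis: int, node_memory_mib: int) -> int:
    """Count how many new nodes of one template are needed to place the leftover pods."""
    new_nodes: list[list[int]] = []
    for pod in leftover:
        if pod.cpu_millis > node_cpu_millis or pod.memory_mib > node_memory_mib:
            continue  # adding a node of this size would not help this pod
        for slot in new_nodes:
            if pod.cpu_millis <= slot[0] and pod.memory_mib <= slot[1]:
                slot[0] -= pod.cpu_millis
                slot[1] -= pod.memory_mib
                break
        else:
            new_nodes.append([node_cpu_millis - pod.cpu_millis,
                              node_memory_mib - pod.memory_mib])
    return len(new_nodes)


if __name__ == "__main__":
    nodes = [Node("node-1", free_cpu_millis=500, free_memory_mib=1024)]
    pending = [Pod(f"web-{i}", cpu_millis=400, memory_mib=512) for i in range(3)]
    leftover = unschedulable_pods(pending, nodes)
    print(len(leftover), nodes_to_add(leftover, node_cpu_millis=1000, node_memory_mib=2048))
```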

#6 about 5 minutes

Using Karpenter for fast and flexible cluster scaling on AWS

Karpenter provides a fast and flexible cluster autoscaling solution for AWS EKS, enabling cost optimization by using spot instances for scaled-out nodes.
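One way to restrict scaled-out nodes to spot capacity is a NodePool requirement on the `karpenter.sh/capacity-type` label. The sketch below builds such a manifest. It assumes the `karpenter.sh/v1` API and an existing `EC2NodeClass` named `default`; the API version, names, and CPU limit are assumptions that should be checked against the installed Karpenter release and are not taken from the talk.

```python
import json


def spot_node_pool(name: str, node_class: str = "default", cpu_limit: str = "100") -> dict:
    """Build a Karpenter NodePool manifest limited to spot capacity (assumed v1 schema)."""
    return {
        "apiVersion": "karpenter.sh/v1",  # assumption: older releases use a beta version
        "kind": "NodePool",
        "metadata": {"name": name},
        "spec": {
            "template": {
                "spec": {
                    "requirements": [
                        {
                            "key": "karpenter.sh/capacity-type",
                            "operator": "In",
                            "values": ["spot"],
                        }
                    ],
                    "nodeClassRef": {
                        "group": "karpenter.k8s.aws",
                        "kind": "EC2NodeClass",
                        "name": node_class,  # placeholder: must already exist
                    },
                }
            },
            # Upper bound on the total CPU this pool may provision.
            "limits": {"cpu": cpu_limit},
        },
    }


if __name__ == "__main__":
    print(json.dumps(spot_node_pool("spot-scale-out"), indent=2))
```

Adding `"on-demand"` to the `values` list would let Karpenter fall back to on-demand instances when spot capacity is unavailable.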

#7 about 1 minute

Exploring KEDA for advanced event-driven autoscaling

KEDA (Kubernetes Event-driven Autoscaling) enables scaling workloads, including to zero, based on events from various sources like message queues or databases.
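The scale-to-zero behavior can be illustrated with a simplified model of queue-based scaling: no messages means the minimum replica count (zero by default), otherwise enough replicas to keep the backlog per replica at or below a target. The parameter names echo the `minReplicaCount` and `maxReplicaCount` fields of a KEDA ScaledObject, but the function itself is a sketch and not KEDA's implementation.

```python
import math


def event_driven_replicas(queue_length: int, target_per_replica: int,
                          min_replica_count: int = 0,
                          max_replica_count: int = 10) -> int:
    """Simplified queue-length scaling with scale-to-zero."""
    if queue_length <= 0:
        # Nothing to process: fall back to the minimum, which may be zero.
        return min_replica_count
    wanted = math.ceil(queue_length / target_per_replica)
    # With work waiting, at least one replica must run.
    lower = max(min_replica_count, 1)
    return max(lower, min(max_replica_count, wanted))


if __name__ == "__main__":
    for backlog in (0, 1, 45, 1000):
        print(backlog, event_driven_replicas(backlog, target_per_replica=20))
```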

#8 about 1 minute

Summary of Kubernetes autoscaling tools and techniques

A recap of the essential autoscaling components: the Metrics Server, HPA, VPA, cluster autoscalers such as Karpenter, KEDA, and the descheduler for cluster optimization.

#9 about 2 minutes

Q&A on autoscaler reliability and graceful shutdown

Discussion on the production-readiness of autoscalers, the importance of observability, and how to achieve graceful pod termination during scale-down events.
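A common building block for graceful termination is handling SIGTERM, which Kubernetes sends to a container before killing it once the grace period expires. The sketch below shows a worker that finishes its current job and then stops. It is one possible approach under these assumptions, not necessarily the one discussed in the Q&A, and the class name is invented for the example.

```python
import signal
import threading


class GracefulWorker:
    """Processes jobs until a stop is requested, never abandoning a job halfway."""

    def __init__(self) -> None:
        self._stop = threading.Event()
        self.processed: list[str] = []

    def request_stop(self, signum=None, frame=None) -> None:
        # Signature matches what signal.signal() expects from a handler.
        self._stop.set()

    def install(self) -> None:
        # Must be called from the main thread.
        signal.signal(signal.SIGTERM, self.request_stop)

    def run(self, jobs) -> list[str]:
        for job in jobs:
            if self._stop.is_set():
                break  # stop taking new work; already finished jobs are kept
            self.processed.append(job)  # placeholder for the real work
        return self.processed


if __name__ == "__main__":
    worker = GracefulWorker()
    worker.install()
    print(worker.run(["job-1", "job-2", "job-3"]))
```

For this to help during scale-down, the pod's `terminationGracePeriodSeconds` needs to be long enough for the longest job to complete.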


Matching moments

01:55 MIN

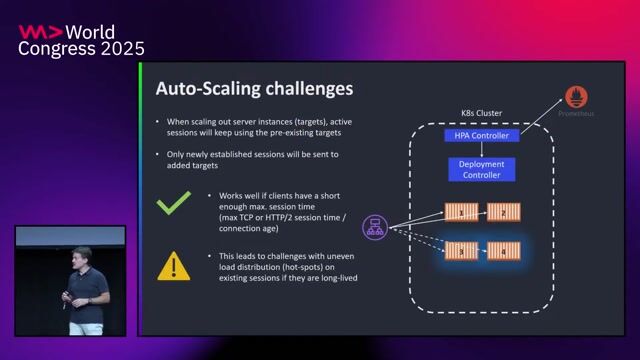

Why autoscaling gRPC services can be challenging

gRPC Load Balancing Deep Dive

Unlock full access

Log in or set up an account to access this feature and more.

02:45 MIN

Understanding the challenges of scaling Kubernetes with confidence

5 steps for running a Kubernetes environment at scale

Unlock full access

Log in or set up an account to access this feature and more.

01:54 MIN

Scaling inference with Kubernetes and smart routing

Unveiling the Magic: Scaling Large Language Models to Serve Millions

Unlock full access

Log in or set up an account to access this feature and more.

07:34 MIN

Live demo of an auto-scaling event-driven application

Serverless Java in Action: Cloud Agnostic Design Patterns and Tips

Unlock full access

Log in or set up an account to access this feature and more.

03:08 MIN

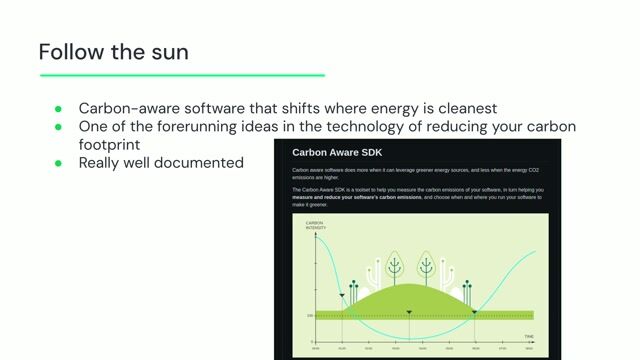

Case study on optimizing a GKE cluster

Minimising the Carbon Footprint of Workloads

Unlock full access

Log in or set up an account to access this feature and more.

04:27 MIN

How application scaling works in Cloud Foundry

CD2CF - Continuous Deployment to Cloud Foundry

Unlock full access

Log in or set up an account to access this feature and more.

00:57 MIN

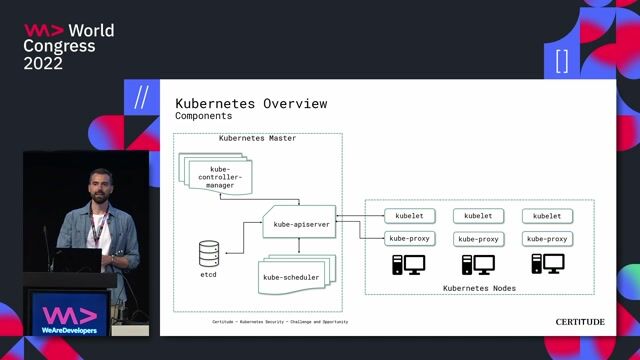

Managing containers at scale with Kubernetes

#90DaysOfDevOps - The DevOps Learning Journey

Unlock full access

Log in or set up an account to access this feature and more.

03:09 MIN

Common challenges when scaling self-hosted runners

A deep dive into ARC the Kubernetes operator to scale self-hosted runners

Unlock full access

Log in or set up an account to access this feature and more.

Featured Partners

Related Videos

42:45

42:45Kubernetes Security - Challenge and Opportunity

Marc Nimmerrichter

21:43

21:43Operating etcd for Managed Kubernetes

Mario Valderrama

46:36

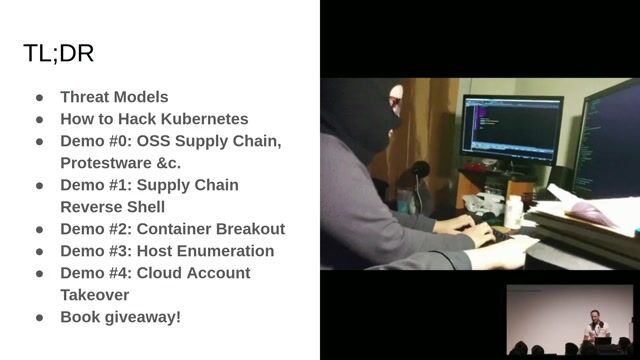

46:36Hacking Kubernetes: Live Demo Marathon

Andrew Martin

50:51

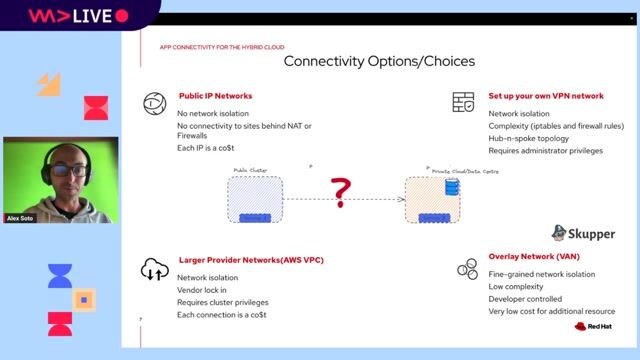

50:51Embracing the Hybrid Cloud: Unlocking Success with Open Source Technologies

Alex Soto

28:12

28:12Scaling: from 0 to 20 million users

Josip Stuhli

57:09

57:095 steps for running a Kubernetes environment at scale

Stijn Polfliet

28:03

28:03Containers in the cloud - State of the Art in 2022

Federico Fregosi

40:00

40:00Local Development Techniques with Kubernetes

Rob Richardson
